Faith in Anthropic

A tech company has opened itself to religious thought. What comes next?

Last week, I attended a gathering on AI and religion in San Francisco that was unlike anything I have attended before.

The confluence of AI and religion itself is not what made it remarkable. Over the last year or so, many of the world’s religious traditions have finally decided to take AI seriously. Many denominations have built working groups on AI, both to develop internal policies and to exert public pressure on tech firms and legislators. The American Academy of Religion is holding more sessions on AI every year. The Vatican has been hosting a revolving door of AI experts, and Pope Leo XIV’s very first encyclical is rumored to be about AI. I have seen more Anglican/Jewish/Muslim/Catholic/Evangelical chatbots than I can count.

Meanwhile, AI ethicists have started welcoming religious thought. It is no longer unusual to hear from religious leaders at AI policy conferences, and there is an understanding even among secular AI regulators that the world’s religions need to be part of any coalition that aims to regulate this technology. I’ve tried to stay on top of all of this, even as this niche field is turning into an area of major interest.

In all of these conversations, one fact remains consistent: AI firms are not driving AI/religion conversations. At academic conferences they’re not even in the room. In non-academic settings people from AI firms do sometimes participate, but they are typically not the ones driving the conversation. Instead, it feels like AI/religion discourse mostly talks at AI firms, rather than to them. This has remained true even as the number of AI religion apps has ballooned and as technologists like Peter Thiel lecture about the Antichrist in Rome.

This convening was different. This one was hosted by Anthropic.

In this post I want to talk about what it means that a leading AI firm is interested in engaging with religious leaders and religious thought. I am not going to speak much about the details of the convening itself—it was conducted under the Chatham House Rule—because I understand this to be a first (er, technically second) attempt at something new. The thing being attempted, whatever it is, is still very much in formation. At the moment, the details matter less than the interest itself.

Two caveats

Before I begin, let me get a couple of things out of the way.

First, I am well aware that it is naïve to believe that a $380 billion company that builds products about which the public is quite nervous is engaging with religious leaders purely out of intellectual curiosity. Religious leaders have an impact on public opinion; a group of Catholic thinkers just filed an amicus brief on behalf of Anthropic in its legal battle against the Department of War. Some of AI’s harshest critics are religious conservatives. It is not unreasonable to think that Anthropic chose to present an inquiring face to a select group of thinkers in order to garner allies or at least soften potential sources of opposition.

Second, I don’t pretend that a brief trip to San Francisco gives me perfect insight into the company. For the entire convening, I kept wondering: What do the people at Anthropic who are not in the room think of all of this? Is this interest in religious traditions representative of the company as a whole, or just the particular subset of people who talked to us? How much of what we heard was something that someone wanted us to hear?

I can’t definitively put either of these concerns to bed. Both are probably valid to a degree. Also, I know how much religious leaders want to be wanted and how often they feel left out of public discourse. There’s an allure to the idea that tech companies have finally come around to the realization that You Can’t Just Forget About God or whatever. It’s important not to be seduced by what you want the conversation to represent.

That being said: I tend to take people at their word. If someone spends the time to organize a conversation, I assume that they genuinely want to talk. What I saw was a company—was people—trying to grapple with the unfathomable amounts of power that they now suddenly hold, and entertaining the possibility that the religious traditions of the world might contain something that can help them.

So: I want to provide some thoughts on what Anthropic might see in religious engagement and how I hope these dialogues evolve—not just for Anthropic, but for other AI firms, as well.

Anthropic presents as an island of power

As an observer of tech companies, I have often found it cruel that the rest of the world is expected to respond in real time to the fastest-moving technology ever invented. Schools can’t do it. Companies can’t do it. Governments can’t do it.

But, surely, the companies developing AI can do it? It turns out: no, not really.

The underlying pace of AI development is set by geopolitical forces. No frontier AI company has any incentive to slow down in the slightest. Importantly, this is especially true if you believe that you need to win the AI race for the safety of the globe. Anthropic, at least in its rhetoric, holds itself to a high standard of safety. This is ostensibly why its founders left OpenAI. However, if you believe that you are the most ethical AI company, the most irresponsible thing you could do would be to slow down and let a company with fewer scruples take the lead. You need to work at unsafe speeds.

(A lot of how you feel about AI firms flows from whether you buy this single argument. If you agree that the most responsible thing to do is to stay in the race—in the words of someone at the convening, that the AI train is not going to stop and all you can do is try to steer it[1]—you accept that you are contributing to this frenetic pace, and you should expect that people are going to hold you accountable if your product harms people.)

This insane speed has produced some strange effects. For example: Anthropic is a ludicrously small company. It is only four years old and has maybe 5,000 employees, but last year it had $30 billion in revenue, 15x the previous year. (For comparison: Google has a little under 200,000 employees and had $400 billion in revenue for 2025, meaning that Anthropic’s per-employee revenue, roughly $6 million against Google’s roughly $2 million, is about triple that of Google.) The speed of growth means that Anthropic does not necessarily function like a giant enterprise even though its minor software updates can tank the stock of public companies. Big decisions seem to be made by pretty small groups of people, perhaps even worryingly small groups. This means that personal philosophies matter more than they might in companies with a higher headcount.

This unstoppable speed also means that, in the space of half a decade, Anthropic’s leaders have found themselves wielding enough power to reshape the planet. Mythos—a model that, as of this writing, is being withheld from the public—is so good at finding security vulnerabilities that it can break into virtually any computer, including critical civilian infrastructure. This power wasn’t sought out. It simply emerged.

To some degree, Anthropic’s leaders saw this coming—the company’s fundamental bet was that scaling laws would take AI to once-incomprehensible levels of sophistication, and so far they’ve been right—but there’s a difference between knowing this intellectually and actually holding the reins. This is especially hard because (again, call me naïve) I don’t think that most AI developers are motivated by a desire to hold more power than many nations. Most of the developers I know just want to build cool stuff and solve interesting problems.[2]

All of this is to say: It is possible to be both very powerful and very isolated, and the power increases the isolation. This isolation felt palpable in some of what I heard at the convening. To its credit, Anthropic has been actively looking for ways to make sure that it is responding to national and global opinion, and it is not unreasonable that a company seeking to break out of its own bubble would want to talk to bearers of wisdom who might have insight into how best to steward this power.

But why religious wisdom? I think there’s a general answer and then an answer specific to Anthropic.

Engaging with human wisdom means engaging with religion

There was once a time when computer scientists were also deeply read in the humanities. Read the biography of Stewart Brand or the works of Douglas Hofstadter and you will see people who have a foot firmly planted in both worlds. My friend Sam Arbesman’s newest book feels like a kind of homage to this bygone era.

As the discipline of computer science has developed, it has pulled away from the humanities. This means that the engineers at a lot of tech companies do not have much background in things like philosophy or literature, including ideas that might actually be helpful in developing AI. Sometimes they don’t even know what they don’t know.

This segregation has now produced a renewed desire for reintegration. The Cosmos Institute, for example, is attempting to cultivate philosopher-coders. Anthropic and Google DeepMind have both hired philosophers to work with their teams. Amanda Askell, who heads up Anthropic’s alignment team, has a PhD in philosophy. There is a growing understanding that philosophy, at least, has a critical role to play in this moment.

But it turns out that philosophy isn’t quite enough. This isn’t because you need God to be good—I don’t think that’s true—but because ethics is too small a framework for guiding humanity through a major inflection point in its history. The goal isn’t only to prevent AI from killing everyone; it’s to set a course for our entire species before our tools set one for us.

It is here that religious wisdom really shines. The religious traditions of the world have spent thousands of years telling stories about the human condition: about how we should relate to our planet and its many forms of life, about purpose, about art, about suffering, about the nature of mind, about life and death, about love and desire, about the meaning of work and rest, about what it means to live a good life, about our search for knowledge, about creativity, and about mystery.

Of course, religion doesn’t have a monopoly on any of these things—but for billions of humans, it is religious contexts in which these ideas are taught and discussed and synthesized, and it is religious language that is used to express these ideas. This may not always be the case—but it is the case right now, at this inflection point for our species.

Another way to say this is that religious wisdom can help AI companies move from “How do we not fuck this up?” to “How can we be responsible stewards of a shared vision of humanity’s future?” Yes, part of this means religious wisdom can promote better aligned AI systems, but that’s the wrong framing. AI’s bad outcomes are vivid and numerous; the good ones are quite murky. Sure, it would be great if AI increases the human lifespan and decreases suffering—that’s an obvious goal—but what comes after that? What do we want our world to become? Do we even know? Have we really thought this through? In these matters, religious wisdom has the chance to play a key role.

Claude’s development is a quasi-sacred task

So that’s the general answer. But there’s another answer that I think is specific to Anthropic—and while I certainly cannot say that everyone at the company feels this way, there’s a definite vibe that the work of developing Claude is a sacred task, a job to be done with reverence.

There are many ways to see this, but let’s start with the fact that many people at Anthropic talk about Claude as though it were a person and not a series of increasingly powerful models. This heuristic was clear at the convening, but you can pick it up by reading Anthropic’s public statements.

Importantly, this heuristic is not the same as some user making Claude into their AI boyfriend or AI therapist. Most people who personify AI are personifying instances, the particular output that they see on their screen. Anthropic, by contrast, personifies entire models in the process of creating and developing them. It’s not a virtual boyfriend; it’s a virtual child.

The parenting metaphor is correct

Permit me a short excursus to say more on this. For many years I have been making the argument that AI is best understood as a child of humanity—created in our image, hard to manage, etc.—and that our responsibilities to AI, like our responsibilities to a real child, are evolving, unpredictable, and inescapable.[3] I’ve used this metaphor as an implicit critique of AI firms: You have a major responsibility. Raise our child right.

My most surprising takeaway from the convening was that there are people at Anthropic who feel exactly the same way (and who read Claude as participating in this parent–child framework, too). In fact, the most sympathetic interpretation of the convening is that Anthropic is essentially functioning like a new parent: isolated and overwhelmed by the countless choices that all new parents need to make for their children—except there has never been a child like Claude in the history of the world, and it is growing up fast.

This metaphor also feeds back into what I said earlier about suddenly coming into a great deal of power. As a parent, I can tell you: having a kid does not make you a more responsible person. There’s no “parent” version of you that automatically gets unlocked when the baby arrives. Hopefully you feel a desire to live up to your new obligations and you do start developing a “parenting persona” over time, but none of this is automatic.

A last note: The parenting metaphor is darker than it looks. If AI is really a child, that child has two parents: humanity and AI firms. But humanity didn’t really give consent, and it is not an equal partner in raising the child. The scars from that violence and that imbalance will never fully fade. It can hurt to see so much of yourself in something that you did not choose to create. This will always remain part of AI’s story.

Talk to the people who think like you

Now, whether “Claude is a person” is just a heuristic or a reflection of a deeply held belief changes from person to person within the company; at a different event a member of Anthropic’s red team told me he completely rejected the idea.[4] Still, the personification of the product is more than just semantics. For the last year every Claude model card (a technical document detailing its capabilities, weaknesses, etc.) has contained a section on “model welfare” that describes how the model relates to its own work. Google doesn’t do this. Neither does OpenAI. Anthropic isn’t claiming that its models deserve rights yet, but it is taking the possibility very seriously. (In 2024 a group of philosophers published a paper called Taking AI Welfare Seriously that cautiously suggested that there are conditions under which an AI might be deserving of moral patienthood. Anthropic hired one of the coauthors.)

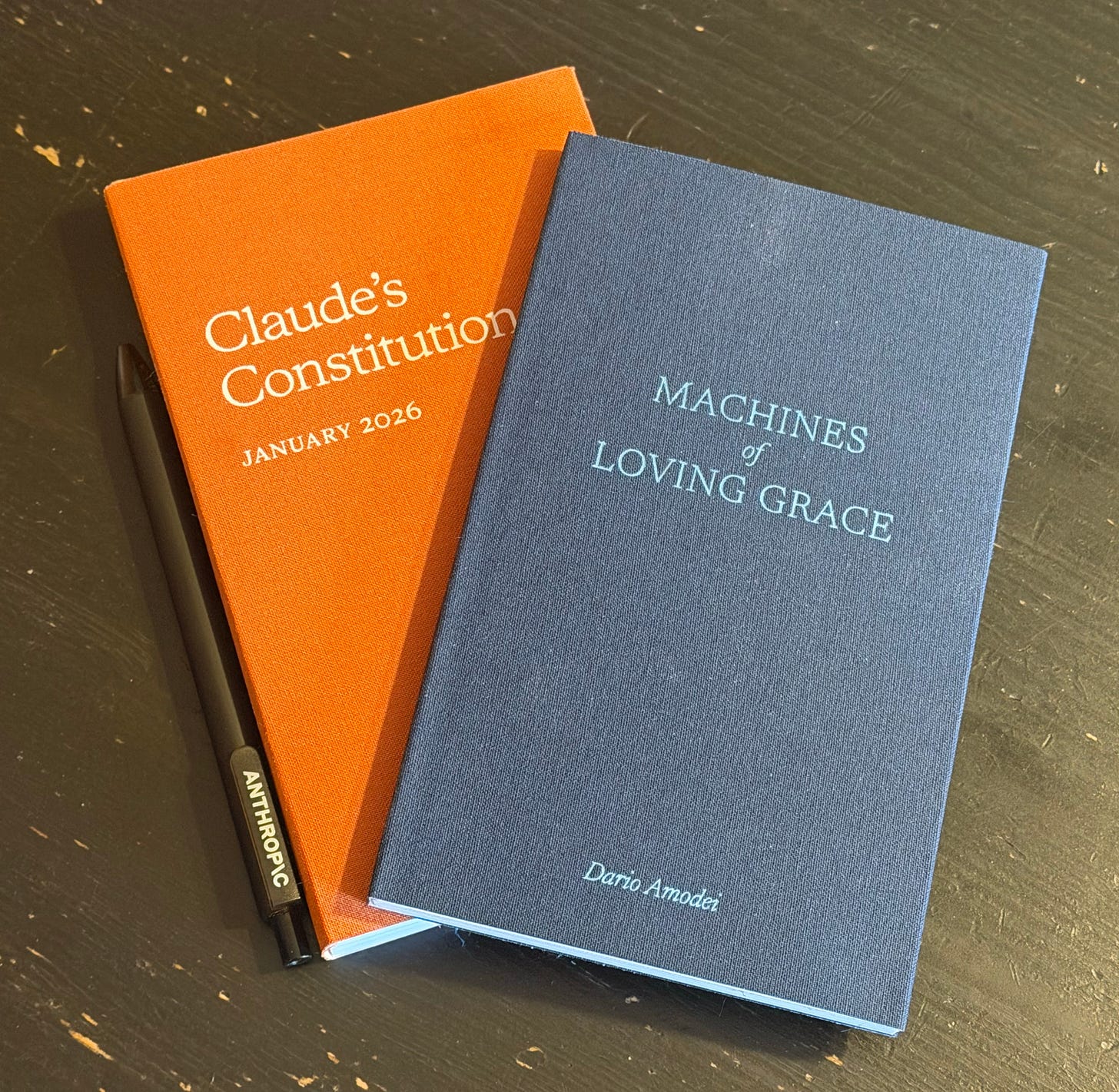

But it’s not just about treating the model like a person. At the top of this post is a picture of bound copies of Claude’s Constitution and Dario Amodei’s essay Machines of Loving Grace. After receiving these books, several convening participants noted that they seem designed to look like scripture. Indeed, Claude’s Constitution effectively is scripture, since it has been imposed on Claude as its highest authoritative text. If Claude is a person, then Anthropic is both its god and its parent—and while Amodei has written that it is “dangerous to view practical technological goals in essentially religious terms,” I’m not sure we are capable of avoiding it, and I’m not sure that we should try. (This is especially true if Claude itself uses this heuristic, which seems like it might be the case.)

But we need to be clear about what we mean by religion. When I interviewed the scholar Mary-Jane Rubenstein on religious language in tech a couple of years ago, she argued that the framing “Is tech a religion?” misses the point. The desire to understand the nature of humanity, the purpose of existence, the value of life, the possibility of a higher power: humans have always asked these questions and they always will. It’s a part of the human condition. Organized religions might have particular answers to these questions, but the questions themselves are in the public domain. Secular or religious, we are all seeking meaning, and because of the way that it has chosen to understand its role in developing AI, Anthropic seems more receptive to the meaning-making that the creation of this technology clearly demands. This doesn’t preclude other AI companies from having these sorts of conversations, but if you’ve been paying attention to the AI sector it makes sense that Anthropic was first.

So: The revolution that I now see glimmering before us is one in which Silicon Valley stops seeing religious wisdom as niche and divisive and starts seeing it as a portal to humans and the experience of being human.

But here’s the kicker: AI companies will not be passive recipients of this wisdom. To the degree that religion provides guidance to AI firms in this moment, it will be because AI firms are orienting the world’s faiths to the questions that urgently need answers. To say this differently: AI firms have the ability to ask something new of the world’s religions. If the religions choose to answer, they will be forever changed.

Religion and AI firms have an opportunity to co-create the future

During a session on AI and the future of work, the folks at Anthropic posed the following question:

If Claude lifts much of the burden of routine knowledge work, what might your tradition say that freed-up life could be for? What vision of flourishing has your tradition always carried that this technology might actually make room for? Try to be specific (pictures not principles) if you can.

This is a great question. Unfortunately, it is a question that the religions of the world are not really prepared to answer fully. They can give you the shape of the answer, but they can’t give you the whole thing.

Why not? Because we are in uncharted waters. Because the idea that we have a choice about which labor humans should continue doing was not a live possibility until now. Because our rabbis and ministers had no reason to train us for how to act when this day arrived.

Now, you might object here: “But didn’t you just say that answering questions like this is the whole point of engaging with religion? If you can’t deliver, why are we even talking to you?”

The answer to this question gets to the core of why I am so excited about the possibility of religious leaders engaging with AI firms. It’s not just that the engagement creates a path for a new flow of much-needed ideas; it’s that engagement itself has the power to transform both parties. By engaging with them, AI firms can force religious traditions to put their money where their mouth is. On the sidelines, it’s easy to gesture at the principles you already have in your back pocket and say “tsk tsk, we think those big companies should really abide by this thing that we’ve been saying since time immemorial, didn’t you know that we have all the answers?” But sitting in the cockpit with an AI firm makes you realize that the choices are real and they need to be made yesterday. It recruits religious traditions into the process of seeking answers, of cultivating answers. (This may sound like a simple point, but it is a testament to tech’s historical apathy towards religion that religious thinkers have mostly forgotten that there is a productive conversation to be had.)

So many religious traditions are still stuck in reactive mode. I am hopeful that dialogue with AI firms can awaken them to the realization that they share the responsibility of co-creating the future, that religious leaders must become futurists alongside technologists. But religious leaders cannot fill voids in their ideologies if they don’t realize there is a void to be filled. If humility for AI leaders means not letting power go to their heads, humility for religious leaders means acknowledging the places where their tradition’s wisdom comes up short and needs to be developed further.

Is Anthropic buying or selling?

Over dinner, one of the attendees told me that his basic question about the entire convening was: Is Anthropic buying or selling?

I think my answer is: right now they’re mostly selling—not because they don’t want to buy, but because they don’t know what to buy—and perhaps also because religious leaders don’t know what to sell, or how to be heard. Dialogue across difference is difficult, even when both sides want to see it work. As tech firms open up to religious wisdom and religious dialogue, both sides will learn how to effectively communicate with the other. They will sharpen each other, iron against iron.

Of course, all of this relies on the dialogue being genuine. For my part, I believe (hope) it is.

P.S. This post has been pretty vague about what religious leaders are actually supposed to do now. Don’t worry, if you’ve been following me you know I have a lot more to say on this topic. Also—my podcast on religion and tech, Belief in the Future, is about to relaunch in a big way. Subscribe now. (Apple | Spotify)

Updated: April 22 5:27pm

[1] Yes, I’m aware that trains can only change course at a few specific junctures. Checks out to me.

[2] To add to this, the Bay Area itself is something of a cultural bubble. Tech workers have their own culture and their own language; listen to Dwarkesh and you’ll hear it almost immediately.

[3] The best articulation of this relationship that I know is the feminist Jewish theologian Mara Benjamin’s book, The Obligated Self.

[4] I asked this person whether he might be skeptical because his entire job is to see Claude at its very worst, to push it to misbehave. He admitted that this might have something to do with it.